May 16, 2026

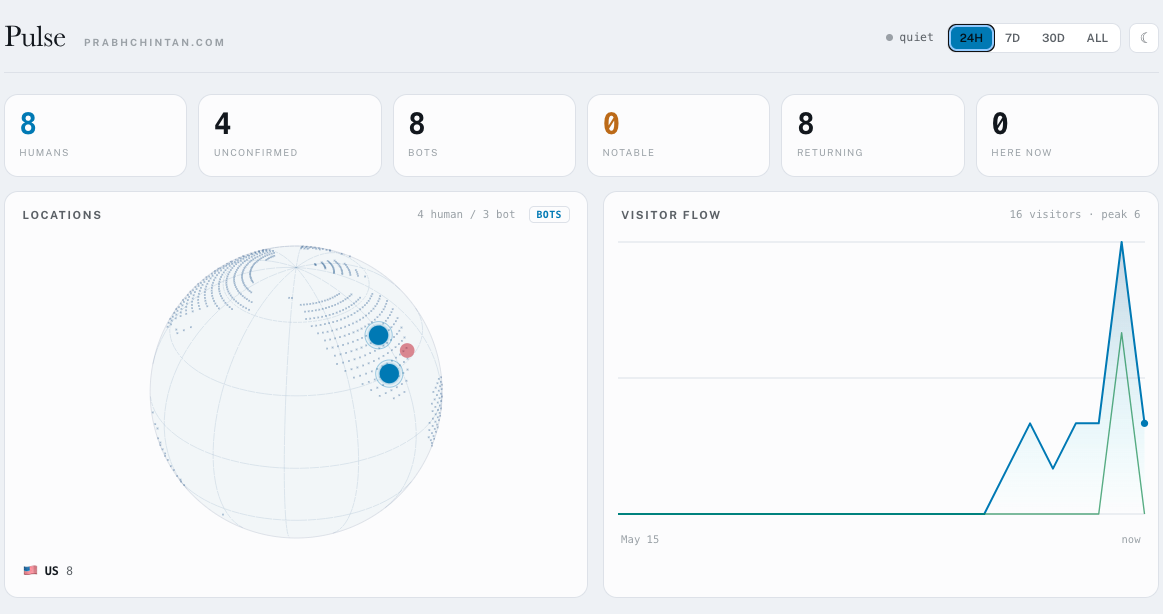

Vibe-coded a DIY Google Analytics for this site today. I never used Google Analytics with much enthusiasm, or any. It's cool that LLMs can help you make things you actually want to use. I also set up a daily newsletter for myself that I'll improve over time. I think I should add more to it than just site overviews: highlights from social media and the news based on certain criteria, market prices, and whatever else I come up with.
